Unified Generative AI API: Run LLMs with Real-Time Data and Actions Through One API

May 9, 2024

If your product integrates with large language models, you quickly run into provider-specific APIs, inconsistent request formats, and model-specific behavior.

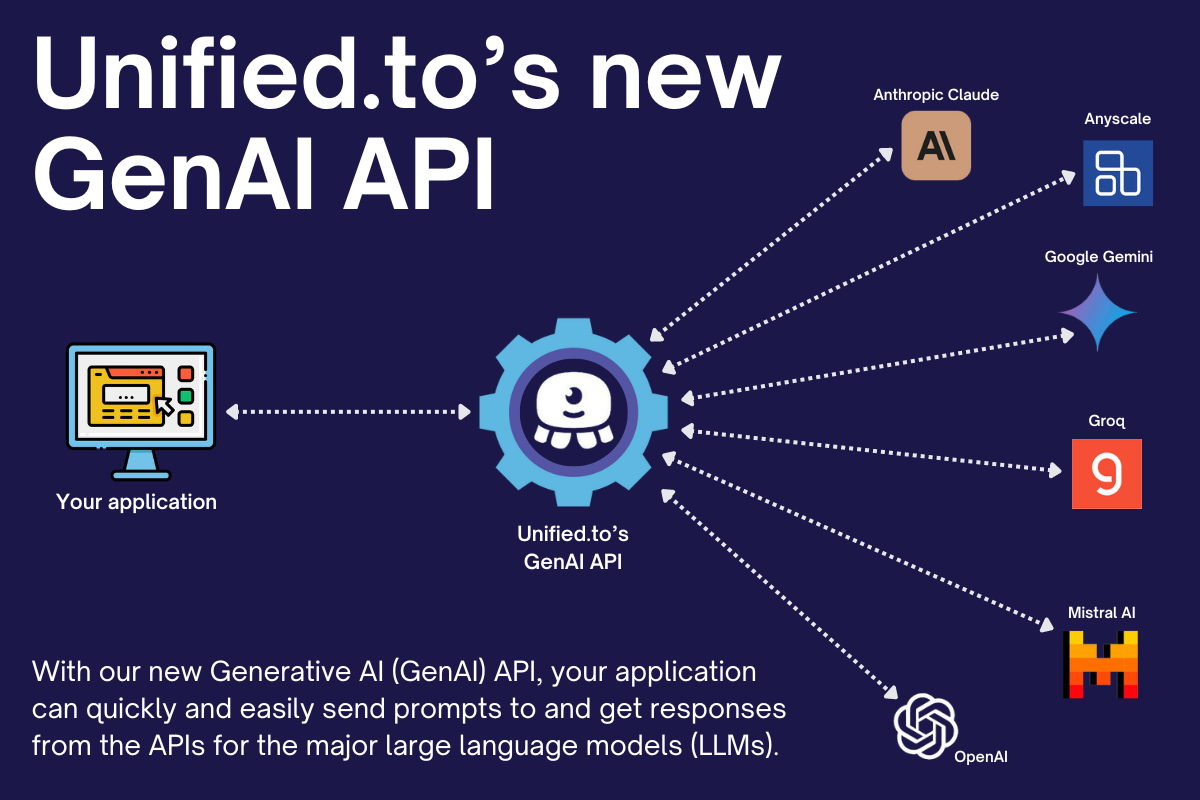

The Unified Generative AI API provides a single interface for sending prompts and receiving responses across multiple LLM providers, while connecting those models to real-time data and actions in external systems. It acts as an execution layer for AI applications, handling models, data, and system actions through one API.

Supported providers

- Anthropic Claude

- Anyscale

- Azure OpenAI

- Cohere

- DeepSeek

- Google Gemini

- Groq

- Hugging Face

- Mistral AI

- OpenAI

- xAI (Grok)

Support for additional model providers is expanding within this category.

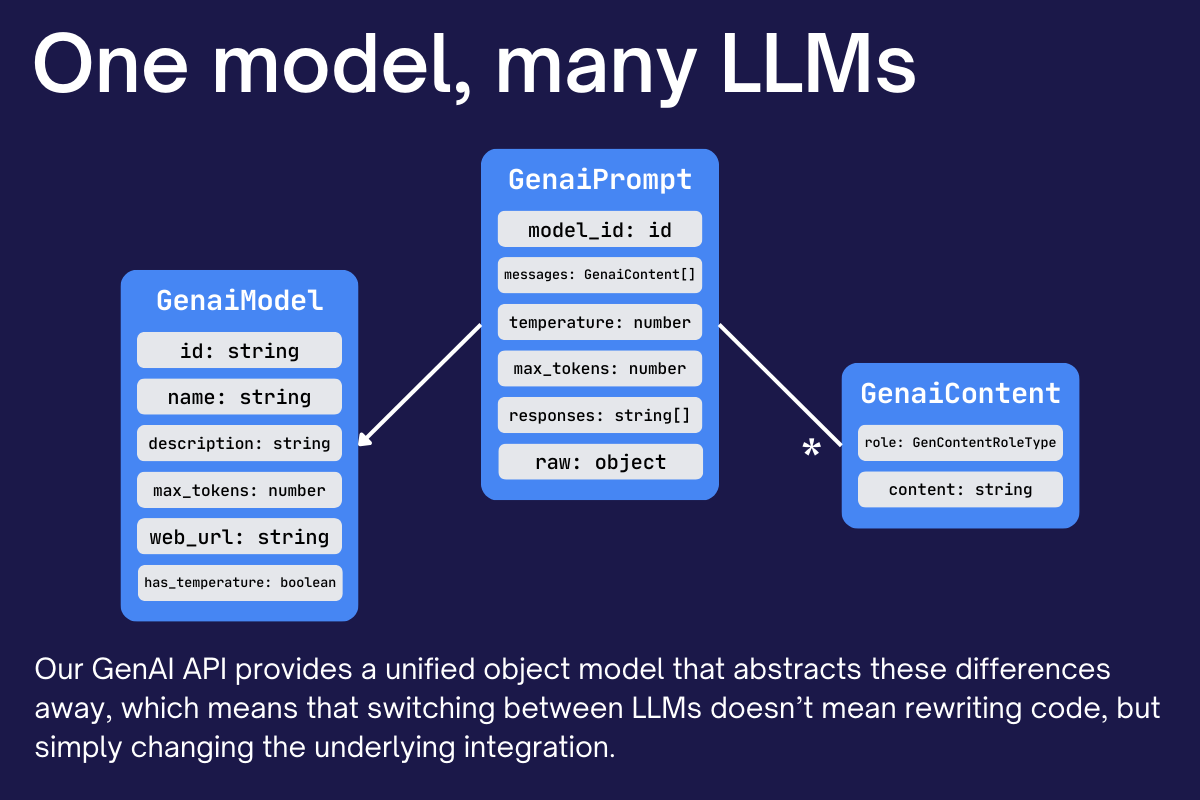

Unified model access across LLM providers

The Generative AI API standardizes interactions across models:

- Prompts follow a consistent request structure

- Responses are normalized across providers

- Model parameters (temperature, maximum output tokens, and so on) are handled consistently

This allows systems to switch between LLM providers without rewriting integration logic.
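
Standardization of this kind usually follows an adapter pattern. Calling code builds one provider-neutral request, and small adapters translate it into each provider's payload and map responses back to plain text. The following is a minimal sketch of that pattern, not a description of how Unified implements it. `ChatRequest`, `to_openai_payload`, `to_anthropic_payload`, and `normalize_text` are hypothetical names. The payloads are simplified approximations of the OpenAI Chat Completions and Anthropic Messages request shapes, so check each provider's reference for the full schemas.

```python
from dataclasses import dataclass
from typing import Any


@dataclass
class ChatRequest:
    """Hypothetical provider-neutral request shape used by the calling code."""
    model: str
    messages: list[dict[str, str]]  # roles: "system", "user", "assistant"
    temperature: float = 0.7
    max_tokens: int = 512


def to_openai_payload(req: ChatRequest) -> dict[str, Any]:
    # OpenAI-style chat payloads keep the system prompt inside `messages`.
    return {
        "model": req.model,
        "messages": req.messages,
        "temperature": req.temperature,
        "max_tokens": req.max_tokens,
    }


def to_anthropic_payload(req: ChatRequest) -> dict[str, Any]:
    # Anthropic-style payloads carry the system prompt in a top-level `system` field.
    system = "\n".join(m["content"] for m in req.messages if m["role"] == "system")
    payload: dict[str, Any] = {
        "model": req.model,
        "messages": [m for m in req.messages if m["role"] != "system"],
        "temperature": req.temperature,
        "max_tokens": req.max_tokens,
    }
    if system:
        payload["system"] = system
    return payload


def normalize_text(provider: str, raw: dict[str, Any]) -> str:
    # Map each provider's response body back to plain text.
    if provider == "openai":
        return raw["choices"][0]["message"]["content"]
    if provider == "anthropic":
        return "".join(b["text"] for b in raw["content"] if b.get("type") == "text")
    raise ValueError(f"unsupported provider: {provider}")


ADAPTERS = {"openai": to_openai_payload, "anthropic": to_anthropic_payload}

if __name__ == "__main__":
    req = ChatRequest(
        model="example-model",  # placeholder model name
        messages=[
            {"role": "system", "content": "Answer briefly."},
            {"role": "user", "content": "What is an embedding?"},
        ],
    )
    # The same request object is translated per provider; calling code does not change.
    for provider, adapt in ADAPTERS.items():
        print(provider, adapt(req))
```

Switching providers then means choosing a different adapter, while the `ChatRequest` built by the application stays the same.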

Core objects in the Generative AI API

- Models: available LLMs and their capabilities

- Prompts: requests sent to models, including messages and parameters

- Embeddings: vector representations for text and semantic search

These objects follow consistent schemas across providers, reducing the need to handle model-specific formats.
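
Embeddings used for semantic search are commonly compared with cosine similarity. The following minimal sketch ranks documents against a query vector. The function names are illustrative, and the three-dimensional vectors are toy values chosen by hand. Real embeddings returned by a model have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means the same direction."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat zero vectors as unrelated
    return dot / (norm_a * norm_b)


def rank_by_similarity(
    query: list[float], docs: dict[str, list[float]], top_k: int = 3
) -> list[tuple[str, float]]:
    """Return the top_k document IDs most similar to the query embedding."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in docs.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


if __name__ == "__main__":
    # Toy vectors for illustration only; real embeddings come from a model.
    docs = {
        "refund-policy": [0.9, 0.1, 0.0],
        "shipping-times": [0.1, 0.9, 0.1],
        "returns-faq": [0.8, 0.2, 0.1],
    }
    query = [1.0, 0.0, 0.0]
    for doc_id, score in rank_by_similarity(query, docs, top_k=2):
        print(f"{doc_id}: {score:.3f}")
    # prints:
    # refund-policy: 0.994
    # returns-faq: 0.963
```

With these toy vectors, `refund-policy` ranks first and `returns-faq` second. Both point mostly along the same axis as the query.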

Real-time model execution

The Generative AI API executes requests in real time:

- Requests are routed directly to model providers

- No caching or stored prompt data

- Responses are returned directly from the source model

This enables systems to dynamically select, route, and evaluate models at runtime.
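
Runtime selection with fallback can be sketched as follows. The sketch assumes you track per-model cost and median latency yourself. `ModelOption`, `route`, `complete_with_fallback`, and `ProviderError` are hypothetical names. The `call` argument stands in for whatever function actually sends a prompt to a model, for example through a unified API. The model names, costs, and latencies in the usage section are placeholders, not real pricing or measurements.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ModelOption:
    name: str
    cost_per_1k_tokens: float  # placeholder figures, not real pricing
    p50_latency_ms: float      # assumed to come from your own monitoring


class ProviderError(Exception):
    """Raised by `call` when a provider errors, rate-limits, or times out."""


def route(options: list[ModelOption], max_latency_ms: float) -> list[ModelOption]:
    """Order candidates: cheapest within the latency budget first, then the rest by speed."""
    within = sorted(
        (o for o in options if o.p50_latency_ms <= max_latency_ms),
        key=lambda o: o.cost_per_1k_tokens,
    )
    over = sorted(
        (o for o in options if o.p50_latency_ms > max_latency_ms),
        key=lambda o: o.p50_latency_ms,
    )
    return within + over


def complete_with_fallback(
    prompt: str,
    options: list[ModelOption],
    max_latency_ms: float,
    call: Callable[[str, str], str],
) -> tuple[str, str]:
    """Try models in routed order; return (model name, response) from the first success."""
    errors: list[str] = []
    for option in route(options, max_latency_ms):
        try:
            return option.name, call(option.name, prompt)
        except ProviderError as exc:
            errors.append(f"{option.name}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))


if __name__ == "__main__":
    catalog = [
        ModelOption("model-a", cost_per_1k_tokens=0.5, p50_latency_ms=300),
        ModelOption("model-b", cost_per_1k_tokens=1.0, p50_latency_ms=400),
        ModelOption("model-c", cost_per_1k_tokens=0.2, p50_latency_ms=2000),
    ]

    def fake_call(model: str, prompt: str) -> str:
        # Stand-in for a real model call; model-a simulates a rate-limit failure.
        if model == "model-a":
            raise ProviderError("rate limited")
        return f"{model}: {prompt}"

    print(complete_with_fallback("Summarize this ticket.", catalog, 1000, fake_call))
    # prints ('model-b', 'model-b: Summarize this ticket.')
```

With this placeholder catalog, `model-c` is cheapest but exceeds the 1000 ms budget, so `model-a` is tried first. When `model-a` raises `ProviderError`, the request falls through to `model-b`.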

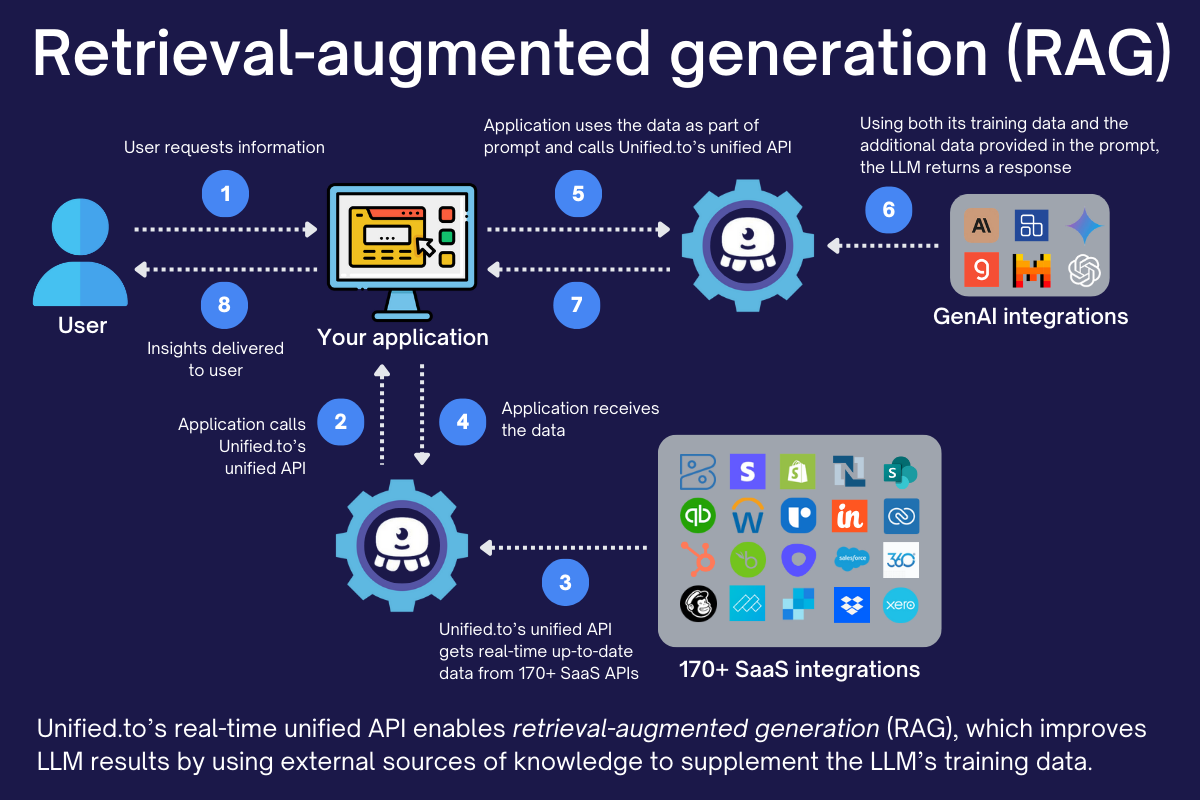

AI with real-time data and system actions

The Generative AI API connects LLMs with live data and external systems through Unified's integration layer.

This allows AI systems to:

- Retrieve real-time data from APIs as part of a retrieval-augmented generation (RAG) pipeline

- Generate responses based on current system state

- Trigger actions in external systems (create records, send messages, update data)

- Combine multiple data sources within a single request

Instead of operating on static inputs, models can reason over live data and take action in connected systems.
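
Triggering actions typically means the model proposes an action as structured output, and application code validates and executes it. The following minimal sketch assumes the model was prompted to reply with JSON such as `{"action": "send_message", "args": {...}}`. The action names and handlers are hypothetical stubs, not Unified endpoints. In practice, each handler would call the connected system.

```python
import json
from typing import Any, Callable

Handler = Callable[[dict[str, Any]], dict[str, Any]]


def create_record(args: dict[str, Any]) -> dict[str, Any]:
    # Hypothetical stub: a real handler would call a CRM or database API.
    return {"status": "created", "record": args}


def send_message(args: dict[str, Any]) -> dict[str, Any]:
    # Hypothetical stub: a real handler would call a messaging API.
    return {"status": "sent", "to": args["to"]}


# Only actions listed here can be executed, whatever the model proposes.
ACTIONS: dict[str, Handler] = {
    "create_record": create_record,
    "send_message": send_message,
}


def dispatch(model_output: str) -> dict[str, Any]:
    """Parse a model's JSON action proposal and run it if the action is allowlisted."""
    try:
        proposal = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(proposal, dict):
        raise ValueError("expected a JSON object")
    name = proposal.get("action")
    if not isinstance(name, str) or name not in ACTIONS:
        raise ValueError(f"action not allowed: {name!r}")
    args = proposal.get("args", {})
    if not isinstance(args, dict):
        raise ValueError("'args' must be a JSON object")
    return ACTIONS[name](args)


if __name__ == "__main__":
    print(dispatch('{"action": "send_message", "args": {"to": "#ops", "text": "Deploy done"}}'))
    # prints {'status': 'sent', 'to': '#ops'}
```

The allowlist ensures that the model can trigger only actions the application has explicitly exposed. Malformed output is rejected before anything runs.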

Retrieval-augmented generation (RAG) with real-time data

The Generative AI API supports RAG by allowing models to access live data from external systems at request time.

Instead of relying only on training data or static context, applications can:

- Retrieve relevant data from APIs before generating a response

- Provide structured, up-to-date context to the model

- Ground outputs in source-system data that can be traced back to where it came from

Because data is fetched in real time, RAG pipelines operate on current system state rather than cached or outdated information.

This removes the need to build and maintain separate data pipelines for RAG—data can be retrieved, injected into prompts, and acted on within a single request.
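
A request-time RAG step can be sketched in three parts. First, fetch current records. Then tag each record with its source ID. Finally, place the records in the prompt so the answer can cite where each fact came from. This is a minimal illustration. `build_grounded_messages` and the `fetch` callable are hypothetical. `fetch` stands in for a live API call to a connected system, and the output uses the role/content message list common to chat-style LLM APIs.

```python
from datetime import datetime, timezone
from typing import Any, Callable

Record = dict[str, Any]


def build_grounded_messages(
    question: str,
    fetch: Callable[[], list[Record]],
    max_records: int = 5,
) -> list[dict[str, str]]:
    """Fetch records at request time and inject them, tagged with source IDs, into the prompt."""
    records = fetch()[:max_records]  # live call on every request; nothing is cached here
    fetched_at = datetime.now(timezone.utc).isoformat(timespec="seconds")
    context = [f"[{r['id']}] {r['summary']}" for r in records] or ["(no matching records)"]
    system = (
        "Answer using only the context below and cite source IDs in brackets.\n"
        f"Context fetched at {fetched_at}:\n" + "\n".join(context)
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


if __name__ == "__main__":
    def fetch_open_deals() -> list[Record]:
        # Hypothetical stub; a real version would query a CRM through an API.
        return [
            {"id": "deal-101", "summary": "Renewal, stage: negotiation"},
            {"id": "deal-207", "summary": "New logo, stage: proposal"},
        ]

    for message in build_grounded_messages("Which deals are in negotiation?", fetch_open_deals):
        print(message["role"], "->", message["content"])
```

The bracketed IDs and the fetch timestamp give each answer a trail back to the source records it was based on.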

What teams build with the Generative AI API

- AI copilots that operate on live business data

- Systems that route requests between models based on cost, latency, or performance

- Retrieval-augmented generation (RAG) pipelines using real-time data

- AI agents that take actions across APIs

- Embedding pipelines for search, classification, and semantic analysis

Who this is for

- AI product teams building copilots or assistants

- Platforms integrating multiple LLM providers

- Systems requiring model routing or fallback logic

- Applications combining AI with operational data

- Products building agent-based workflows across APIs

What is a generative AI API?

A generative AI API allows developers to send prompts to large language models and receive generated responses. A unified generative AI API standardizes how models are accessed across providers. Combined with real-time data and system actions, it enables AI systems to operate on live context and execute tasks across external platforms.

Get started

The Generative AI API is available on all Unified plans.